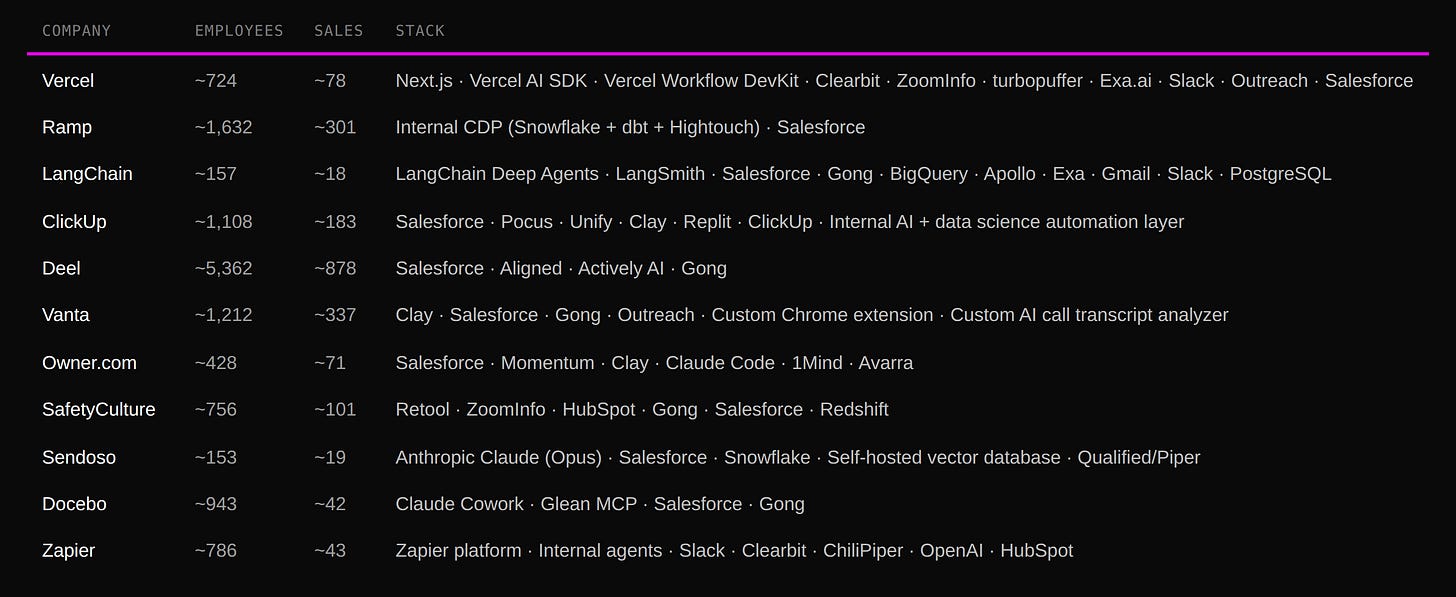

9 Lessons From 11 Growth-Stage Companies That Built GTM Agents In-House

Vercel, Ramp, LangChain, ClickUp, Deel, Vanta & others: what they built, their tech stacks, and what we can learn from them


Thanks for reading this hand-rolled, 100% organic, farm-to-table (human-written) newsletter.

Presented by Clay.


Hey, y’all! 👋

It’s hard to open LinkedIn or X without seeing a “we replaced our entire {sales team} with Claude Code” post these days. But, what I’ve noticed: most of those posts are by some dude who runs a 4-person agency.

So I started collecting examples of growth-stage companies (i.e., “at-scale” companies with large sales teams of dozens or hundreds of sellers) that have deployed AI agents into their (complex) go-to-market orgs. Larger teams mean higher stakes and more complexity across people, teams, and (maybe most importantly) data systems.

I have curated 11 examples of teams that have built and deployed AI agents in-house. They spent months thinking through edge cases, data architecture, and production reliability. They wrote custom code, connected multiple tools via API or MCP, shipped it to production, put it in front of their sales teams, and (again, maybe most importantly) got positive outcomes.

Vercel went from 10 inbound SDRs to 1.

LangChain saw a 250% increase in lead-to-qualified-opportunity conversion.

Ramp has over 80% of sales workflows running through their internal platform.

The proof is in the pudding (as they say).

Alright, let’s get into it.

What they built

LangChain → GTM Agent with Memory and Account Intelligence

This is the most technically detailed example I found. Published just last week by Vishnu Suresh and Jess Ou on the LangChain blog.

Before the agent, every outbound started the same way: a rep toggling between Salesforce for the account record, Gong for call history, LinkedIn for the contact, and the prospect’s website for context. Fifteen minutes of research before a single word was written.

Their agent triggers on new Salesforce leads, checks whether anyone has already reached out, gathers context (including full meeting history from Gong), and sends a Slack draft with reasoning and sources for the rep to approve. Nothing goes out without explicit human review.
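To make the "nothing goes out without explicit human review" part concrete, here is a minimal sketch of that gate in Python. It is not LangChain's code. The `Draft` fields, `handle_new_lead`, and `send` are names I made up for illustration, and the Salesforce, Gong, and Slack calls are left out entirely.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Draft:
    lead_email: str
    body: str
    reasoning: str
    sources: list[str] = field(default_factory=list)
    approved: bool = False  # flipped only by a human reviewer


def handle_new_lead(lead: dict, already_contacted: set[str]) -> Optional[Draft]:
    """Return a draft for review, or None if someone has already reached out."""
    if lead["email"] in already_contacted:
        return None
    return Draft(
        lead_email=lead["email"],
        body=f"Hi {lead['first_name']}, saw what {lead['company']} is building.",
        reasoning="New inbound lead with no prior outreach on record.",
        sources=["crm_record"],  # hypothetical source label
    )


def send(draft: Draft, outbox: list[Draft]) -> None:
    """Refuse to send anything a rep has not explicitly approved."""
    if not draft.approved:
        raise PermissionError("Draft has not been approved by a rep")
    outbox.append(draft)
```

The design choice worth noticing: approval is a property of the draft itself, so there is no code path that sends without it.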

Two things make this one stand out:

First, the memory system. When a rep edits a draft in Slack, the system compares the original against the revised version. If the changes are substantive, an LLM analyzes the diff and extracts structured style observations—what changed, what it implies about the rep’s preferences. Those observations get stored per rep, and every future run reads them before drafting. Each edit teaches the agent. A weekly cron job compacts these memories to prevent bloat. That’s a genuine learning loop, not just prompt tuning.
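Here is a rough sketch of that edit-driven memory loop, assuming a simple in-memory store. The 10% threshold, the `RepMemory` class, and the rule-based `extract_observations` are all my own stand-ins (the real system uses an LLM to analyze the diff, and I don't know how it decides an edit is "substantive").

```python
import difflib
from collections import defaultdict

# Hypothetical cutoff: edits that change under 10% of the words are ignored.
SUBSTANTIVE_THRESHOLD = 0.10


def change_ratio(original: str, revised: str) -> float:
    """Fraction of the draft that changed, compared word by word (0.0 to 1.0)."""
    matcher = difflib.SequenceMatcher(None, original.split(), revised.split())
    return 1.0 - matcher.ratio()


def extract_observations(original: str, revised: str) -> list[str]:
    """Rule-based stand-in for the LLM call that reads the diff."""
    observations = []
    if len(revised.split()) < 0.8 * len(original.split()):
        observations.append("prefers shorter emails")
    if "!" in original and "!" not in revised:
        observations.append("avoids exclamation marks")
    return observations


class RepMemory:
    def __init__(self) -> None:
        self._store: dict[str, list[str]] = defaultdict(list)

    def record_edit(self, rep_id: str, original: str, revised: str) -> bool:
        """Store observations only when the edit is substantive."""
        if change_ratio(original, revised) < SUBSTANTIVE_THRESHOLD:
            return False
        self._store[rep_id].extend(extract_observations(original, revised))
        return True

    def compact(self, rep_id: str) -> None:
        """Stand-in for the weekly compaction job: drop duplicates, keep order."""
        self._store[rep_id] = list(dict.fromkeys(self._store[rep_id]))

    def for_prompt(self, rep_id: str) -> str:
        """Text block to prepend to the drafting prompt on future runs."""
        return "\n".join(f"- {obs}" for obs in self._store[rep_id])
```

Every future draft for a rep would call `for_prompt(rep_id)` first, which is the "each edit teaches the agent" part.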

Second, account intelligence. Every Monday morning, the agent pulls data from Salesforce and BigQuery, checks the outside world for funding rounds, product launches, and AI initiatives, and generates account-level reports. For sales, it surfaces expansion opportunities and competitive moves. For their deployed engineering team, it flags account health—usage trends, upcoming renewals, customers running low on credits. Two audiences, two different reports, same underlying data.

Results: 250% increase in lead-to-qualified-opportunity conversion from December 2025 to March 2026. 3x more pipeline dollars. Reps reclaimed 40 hours per month each. 50% daily active usage and 86% weekly active usage across the sales team. Reps increased follow-up with lower-intent leads by 97%.

ClickUp → 2 People Doing the Work of 40 SDRs

Kyle Coleman (Global VP Marketing) shared on LinkedIn that ClickUp has built an internal AI SDR program they call “programmatic pipegen.” The system runs alongside ~30 human XDRs, monitoring over 100 signals across ClickUp’s PLG user base (using Pocus) and automating outreach for speed-to-lead. Human XDRs handle phone calls and strategic account work. The AI SDR feeds them pipeline.

The entire AI SDR motion is orchestrated by just 2 people. Kyle estimates it would take ~40 SDRs to operate at the same scale without the AI, data science, and automations they built. The human XDRs and AI SDR operate in concert, not competition (5 XDRs were promoted to AE the same week Kyle posted about this).

Deel → AI-Augmented Sales Workflow Across 7 Use Cases

Mike Gallardo (Sales Director) shared on LinkedIn that Deel built an AI-augmented sales workflow covering 7 core use cases:

AI-generated first touches analyzing role, company, funding, and web activity.

Discovery prep surfacing pain points and prior conversations.

Call debriefs summarizing decision criteria and power structures.

Objection handling predicting legal, security, and procurement pushback.

Real-time competitive battle cards.

24/7 AI-powered customer Q&A.

Deal strategy alerts when new stakeholders engage or risk builds.

Results: 90% of the team hit quota in Q4.

Vercel → Inbound Lead Agent that replaced 10 SDRs with 1 (and open-sourced it)

Drew Bredvick leads Vercel’s GTM engineering team. Here’s what their agent does:

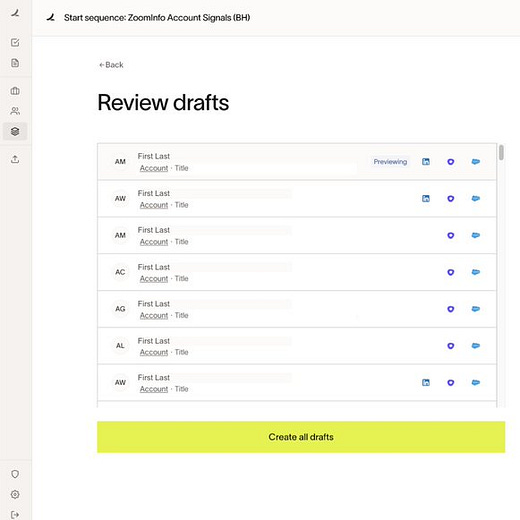

Form submission → enrichment (Clearbit, ZoomInfo, etc.)

Lead Agent kicks off → deep research on the company/person

AI makes a qualification decision → categorizes into buckets (qualified, follow-up, support, not sales-related)

Slack notification → pings the SDR with full context + reasoning

Draft email ready → for qualified leads, we’ve already written the response and pushed it to Outreach

The human’s job went from “do research, qualify, write email, send” to “review AI’s work, press send.”

It’s like we gave them the controller to a video game instead of making them play the boring parts.
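The qualify-then-route steps above can be sketched in a few lines of Python. This is not Vercel's open-sourced code. The four bucket names come from the flow above, but the keyword rules, the 50-employee cutoff, and every function name are placeholders for what is really an LLM decision.

```python
from dataclasses import dataclass
from enum import Enum


class Bucket(Enum):
    QUALIFIED = "qualified"
    FOLLOW_UP = "follow-up"
    SUPPORT = "support"
    NOT_SALES = "not sales-related"


@dataclass
class Lead:
    email: str
    message: str
    employee_count: int  # assumed to be filled in by the enrichment step


@dataclass
class Decision:
    bucket: Bucket
    reasoning: str


def qualify(lead: Lead) -> Decision:
    """Rule-based stand-in for the LLM qualification call."""
    text = lead.message.lower()
    if "bug" in text or "error" in text:
        return Decision(Bucket.SUPPORT, "Message describes a product issue.")
    if "pricing" in text or "demo" in text:
        if lead.employee_count >= 50:  # hypothetical cutoff
            return Decision(Bucket.QUALIFIED, "Buying intent from a 50+ person company.")
        return Decision(Bucket.FOLLOW_UP, "Buying intent, but the company is small.")
    return Decision(Bucket.NOT_SALES, "No buying intent detected.")


def route(lead: Lead, decision: Decision) -> list[str]:
    """Every lead pings Slack with reasoning; only qualified leads get a draft."""
    actions = [f"slack: {lead.email} -> {decision.bucket.value} ({decision.reasoning})"]
    if decision.bucket is Bucket.QUALIFIED:
        actions.append(f"draft_email: {lead.email} (awaiting SDR review)")
    return actions
```

Keeping the reasoning attached to the decision is what lets the SDR review the AI's work instead of redoing it.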

They open-sourced the architecture (here).

They also built a retrieval layer on turbopuffer—indexing every Gong call, Slack thread, and email chain into a vector search database, organized by Salesforce account. The agent queries that account-specific history at runtime so it has real context, not just whatever fits in a prompt.
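If "retrieval layer organized by account" sounds abstract, here is a toy version in Python. To be clear, this does not use turbopuffer or its API. It is an in-memory stand-in with a bag-of-words "embedding" so the example runs with no dependencies, and `AccountIndex` is a name I invented. The one idea it tries to show is that every query is scoped to a single account's documents.

```python
import math
import re
from collections import Counter, defaultdict


def embed(text: str) -> Counter:
    """Toy bag-of-words vector; a real system would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class AccountIndex:
    """Documents are stored per account, so a query never crosses accounts."""

    def __init__(self) -> None:
        self._docs: dict[str, list[tuple[str, str, Counter]]] = defaultdict(list)

    def add(self, account_id: str, source: str, text: str) -> None:
        self._docs[account_id].append((source, text, embed(text)))

    def query(self, account_id: str, question: str, top_k: int = 2) -> list[tuple[str, str]]:
        """Return the top_k (source, text) pairs for this account only."""
        q = embed(question)
        ranked = sorted(
            self._docs.get(account_id, []),
            key=lambda doc: cosine(q, doc[2]),
            reverse=True,
        )
        return [(source, text) for source, text, _ in ranked[:top_k]]
```

At runtime the agent would pull only the few most relevant snippets for the account in question, rather than stuffing the whole corpus into the prompt.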

Beyond inbound, Vercel has since built agents for close/loss analysis (reads Gong calls and extracts why deals were actually lost—not the seller’s excuse), objection extraction (pulls objections from sales calls and routes product gaps to engineering teams), and field feedback aggregation.

ROI from the agent: 32x

Cost: ~$60K/year (engineering hours + AI API costs)

Savings: $2M+/year (headcount reduction + efficiency gains)
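The writeup doesn't say how the 32x was calculated. One reading that fits the numbers is net ROI, (savings minus cost) divided by cost. That formula is my assumption, and so is plugging in exactly $2M and $60K when both figures are approximate.

```python
def net_roi(annual_savings: float, annual_cost: float) -> float:
    """Net return per dollar spent: (savings - cost) / cost."""
    return (annual_savings - annual_cost) / annual_cost


# Assumed round figures; the source gives "~$60K" and "$2M+".
roi = net_roi(annual_savings=2_000_000, annual_cost=60_000)
print(f"{roi:.1f}x")  # prints "32.3x"
```

A plain savings-to-cost ratio would come out closer to 33x, so the net version is the one that lands on 32.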

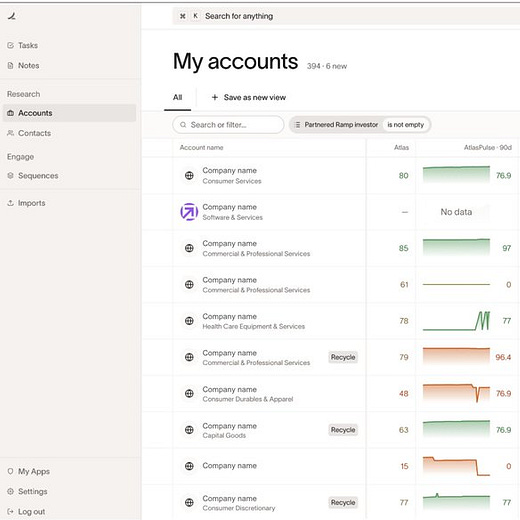

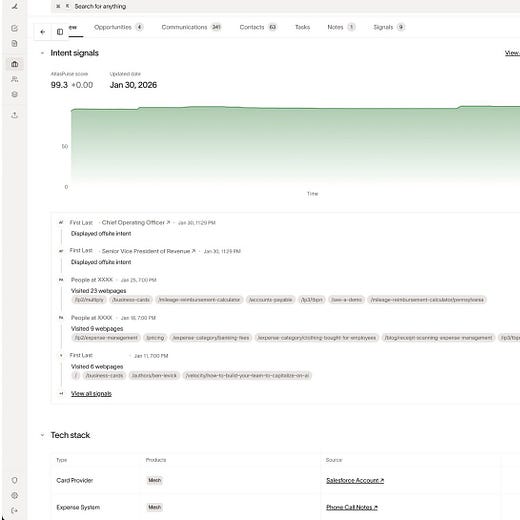

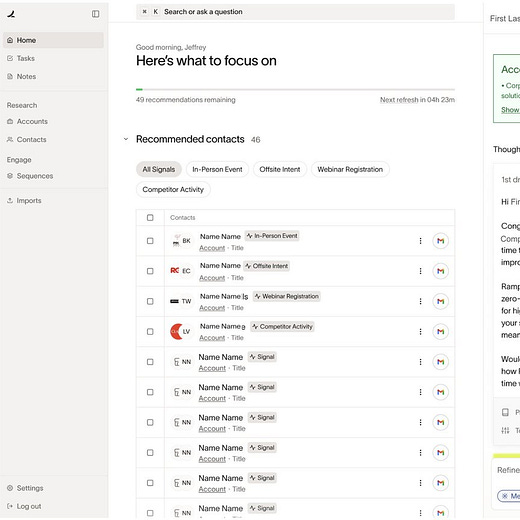

Ramp → “Ramp Revenue” Internal GTM Platform

Parth Gujare (Senior Product Manager) shared on X that Ramp has been building its own internal revenue stack they call “Ramp Revenue.” The mandate was to help the sales team drive more pipeline and build the most efficient GTM org in the world.

It’s powered by a customer data platform that processes millions of records daily (including internal data, external data, and CRM data) plus a unified action layer with agents embedded directly in seller workflows. Sellers don’t toggle between a dozen systems to figure out who to reach out to or what to say. The agent surfaces it.

Over 80% of sales workflows are now powered by Ramp Revenue.

Worth noting: Ramp also built an internal coding agent called Inspect that now accounts for roughly 30% of merged engineering pull requests. This is a company that builds by default. When they looked at the GTM vendor landscape, they decided they could do it better themselves. That’s a bet most companies can’t make, but for Ramp, it’s consistent with how they operate.

Vanta → 3 AI Workflows Shipped in a Single Day

During a Clay<>Vanta AI Hackathon, the team built and deployed three AI-powered GTM workflows in approximately 8 hours:

AI-powered trust center mockups tailored to each prospect’s brand for outbound personalization

A Chrome extension automating manual SDR research—gave 70+ new SDR hires the power of Clay without full tool onboarding, saving multiple hours per day

An AI call transcript analyzer that transforms hours of conversation into actionable insights flowing into custom presentation decks

All 3 workflows were presented to the entire company the same day. The SDR research Chrome extension now serves 70+ new hires.

A few more examples

Owner.com’s Kyle Norton (CRO) manages a 100+ person sales team with AI agents embedded across workflows. He trained his first agent himself, every single day for a month. Reviewing outputs each morning, finding what’s wrong, refining. His take: agents require the same onboarding you’d give a new hire. The first 30 days are daily training. The first 1,000 outputs need manual review. Norton has also said their AI agents are now better than a mid-pack AE or SDR. Not better than the best, but better than the 50th percentile. (More on Kyle’s thought process here: Learn, Build, Scale: A Revenue Leader’s AI Transformation Roadmap.)

SafetyCulture built four AI agents across their GTM workflow using Retool, ZoomInfo, HubSpot, Gong, Salesforce, and Redshift: AI-powered lead enrichment calling 5 data providers in parallel, an Auto BDR for personalized outreach and meeting booking, an AI lifecycle personalizer for feature recommendations, and a custom app layer replacing clunky GTM systems with a single-pane-of-glass view. Results: 2x increase in opportunities created. 3x increase in meeting booking rates. BDRs save 30 minutes per opportunity. 80%+ of the team uses the custom app daily. (Profiled by Kyle Poyar in Growth Unhinged.)
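Calling several data providers in parallel is a well-worn pattern, and a minimal Python sketch looks like this. It is not SafetyCulture's Retool build. The providers here are fake functions, and the "earlier provider wins on conflicts" merge rule is my assumption about how you might reconcile overlapping fields.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

Provider = Callable[[str], dict]


def enrich(domain: str, providers: dict[str, Provider], timeout: float = 5.0) -> dict:
    """Call every provider concurrently, then merge results in priority order."""
    results: dict[str, dict] = {}
    with ThreadPoolExecutor(max_workers=max(1, len(providers))) as pool:
        # Submit everything first so the calls overlap instead of running serially.
        futures = {name: pool.submit(fn, domain) for name, fn in providers.items()}
        for name, future in futures.items():
            try:
                results[name] = future.result(timeout=timeout)
            except Exception:
                results[name] = {}  # one failing provider should not sink the rest
    merged: dict = {}
    for name in providers:  # dict order doubles as provider priority
        for key, value in results[name].items():
            merged.setdefault(key, value)  # keep the highest-priority value
    return merged


# Fake providers for illustration only.
def provider_a(domain: str) -> dict:
    return {"employees": 250, "industry": "SaaS"}


def provider_b(domain: str) -> dict:
    raise RuntimeError("rate limited")


def provider_c(domain: str) -> dict:
    return {"employees": 300, "hq": "Sydney"}
```

Calling `enrich("example.com", {"a": provider_a, "b": provider_b, "c": provider_c})` fills gaps from lower-priority providers and shrugs off the one that failed.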

Sendoso built 3 custom agents using Anthropic’s framework: a contract scraper that OCRs closed-won PDFs and feeds them to Claude, a deep research engine with a self-hosted vector database pulling from Snowflake product-usage data, and an AI proposal generator. They went from 15+ BDRs to 4 BDRs generating 30%+ more pipeline than the original 15 produced. Their key insight: nobody else has their proprietary product-usage and contract data, so external AI SDR tools can’t replicate what they built.

Docebo’s Emily Pick (Product Marketing) used Claude Cowork to rebuild Docebo’s ICP from scratch over a weekend. She fed it two SFDC reports (all customers by ARR, plus all closed-won and closed-lost opps from Q4 with win-loss notes), a local folder of win-loss interview transcripts from H2 2025, and 25 account briefs generated through the Glean MCP pulling from Salesforce, Gong, CRM notes, emails, and call recordings. The output: two fully defined ICPs with complete attribute sets, the data case for each, deal triggers, qualification criteria, competitive landscape, and an activation guide.

Zapier now runs more AI agents than it has employees (800+ agents across the company). Wade Foster (CEO) shared his top agents by department on LinkedIn. The sales-specific ones include: automatic call prep, an outbound assistant, sales chat attribution tracking, a Gmail auto-replier, and a smart lead qualifier.

The patterns across these builds

After going through all of these examples (plus a handful of others I researched but didn’t include), here are nine lessons worth calling out.

1. The data work is the actual project

Every team that successfully deployed an agent had to solve a data problem first. Not a model problem. Not a prompt engineering problem. A data problem. I have talked about this before.

Drew Bredvick’s team at Vercel tried the obvious approach first—stuff all the context into the prompt. Gong transcripts, Slack messages, Salesforce records. It overflowed context windows almost immediately. So they built a retrieval layer on turbopuffer, indexing their entire GTM corpus per Salesforce account. That architecture is the reason the agent works.

Ramp built an entire internal CDP that processes millions of records daily before the agents could sit on top of it.

LangChain connected their agent to Salesforce, Gong, BigQuery, Apollo, Gmail, LinkedIn, and Exa for web research. The first step before any code was defining what data sources the agent needed and how they’d be connected.

The lesson: if your CRM is a mess, your enrichment data is stale, and your conversation intelligence isn’t connected to anything, you’re not ready for agents. You need to start with a data cleanup project.

2. “Shadow your best rep” is the new requirements doc

Drew Bredvick said something in his writeup that I think is the single most important line: before writing any code, he spent a week watching Vercel’s top-performing SDR.

What signals made her excited about a lead? What made her immediately disqualify? How did she research companies? What did she include in emails that got responses?

He called it the “revealed preference” approach—watch what the best people actually do, not what the playbook says they should do.

LangChain took a similar approach but formalized it differently. Before writing production code for any workflow, they define what success looks like and build a small scenario library grounded in situations their reps actually face. They expand that library as the agent matures. By the time something ships, they have an eval suite that catches regressions and runs in CI automatically.
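A scenario library can start very small. Here is a sketch of the idea in plain Python, assuming a simple "must include / must not include" check per scenario. LangChain's actual eval suite is surely richer than string matching, and `Scenario`, `run_evals`, and `draft_outreach` are illustrative names, not theirs.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Scenario:
    name: str
    lead: dict
    must_include: list[str] = field(default_factory=list)
    must_not_include: list[str] = field(default_factory=list)


def run_evals(draft_fn: Callable[[dict], str], scenarios: list[Scenario]) -> list[str]:
    """Run every scenario and return a list of human-readable failures."""
    failures = []
    for scenario in scenarios:
        draft = draft_fn(scenario.lead).lower()
        for phrase in scenario.must_include:
            if phrase.lower() not in draft:
                failures.append(f"{scenario.name}: missing '{phrase}'")
        for phrase in scenario.must_not_include:
            if phrase.lower() in draft:
                failures.append(f"{scenario.name}: contains '{phrase}'")
    return failures


# Placeholder for the real agent call.
def draft_outreach(lead: dict) -> str:
    return f"Hi {lead['first_name']}, saw that {lead['company']} is hiring ML engineers."


SCENARIOS = [
    Scenario(
        name="personalizes with name and company",
        lead={"first_name": "Ana", "company": "Acme"},
        must_include=["Ana", "Acme"],
        must_not_include=["Dear Sir"],
    ),
]
```

In CI you would fail the build whenever `run_evals(draft_outreach, SCENARIOS)` returns anything, and add a new scenario each time a rep flags a bad draft.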

Kyle Norton at Owner.com echoed this from the CRO seat. He trained his first agent himself. Every single day for a month. His take: if you haven’t personally trained an agent, you have no idea what you’re talking about. The CRO or whoever owns the motion needs to be the one doing the training, at least at first.

The pattern is consistent. The agent doesn’t invent the motion. It scales what’s already working. If you don’t have a winning playbook worth cloning, you don’t have an agent project.

3. Every team had someone who owned the project end-to-end

Parth Gujare at Ramp is a Senior Product Manager with a CS degree and an MBA from Wharton. He’s a technical PM who thinks in systems.

LangChain’s agent was built by Vishnu Suresh and Jess Ou—engineers embedded in the GTM org.

Kyle Norton at Owner.com is a CRO who personally trained the first agent daily. Hands-on involvement, not just exec sponsorship.

Drew Bredvick is a GTM Engineer at Vercel. He writes code. He built the agent architecture in Next.js, set up the vector search infrastructure, and wired the Slack integration for human-in-the-loop approval.

Emily Pick at Docebo is a product marketer who connected SFDC, Gong, and Glean through Cowork—no code, no engineering team involved.

In every case, there was someone who took ownership of the full loop: understanding the workflow, connecting the data, building the agent, and iterating with the team. Sometimes that person is a GTM Engineer who writes code. Sometimes it’s a PM who thinks in systems. Sometimes it’s a marketer or operator who knows how to connect tools and feed them the right data. The common thread isn’t a specific job title, but someone willing to get their hands dirty and iterate daily.

4. The most successful programs are led by tiny teams

ClickUp’s entire AI SDR motion is run by 2 people, doing the work of 40 SDRs. Vercel’s GTM engineering team is small. Sendoso cut from 15+ BDRs to 4 while generating 30%+ more pipeline. When I was running the auto-SDR function at Apollo, it was just me booking 1,800 meetings a quarter across 20+ reps.

These aren’t massive engineering projects with 20-person teams and 6-month timelines. They’re small, scrappy GTM engineering efforts. Usually 2–4 people assembling tools, writing prompts, connecting data sources, and iterating daily with the sales team.

5. The tech stack is remarkably consistent

Across every example I looked at, the building blocks are similar: Salesforce as the CRM and system of record. Gong or Attention for conversation intelligence. Some combination of Clay, Clearbit, ZoomInfo, or Apollo for enrichment. Slack for human-in-the-loop approval flows. An LLM (usually Anthropic or OpenAI) for reasoning and generation. And an orchestration layer—whether that’s Clay, a custom build (Vercel, Ramp), a framework (LangChain Deep Agents), or a workflow tool (Zapier, Retool).

Nobody is building exotic AI infrastructure from scratch. They’re assembling agents from existing building blocks with good engineering and thoughtful product thinking. The barrier to building useful agents is lower than most people think.

6. The biggest differentiator is proprietary data

The companies seeing some of the best results (eg: LangChain’s 86% weekly active usage across their sales team, ClickUp’s 2-person team replacing 40 SDRs, Deel’s 90% quota attainment) are feeding agents internal data that no third-party tool can replicate.

Ramp’s Parth Gujare made this point implicitly with “Ramp Revenue”: the platform processes millions of internal, external, and CRM records. That’s the moat. Not the model. Not the prompt. The data only they have.

7. Most of these agents are doing the boring parts of sales

LangChain’s agent: lead research → context gathering → personalized draft → Slack approval. Ramp’s agents: surface who to reach out to and what to say. Deel’s agents: research, prep, competitive intel, call debriefs. Vercel’s lead agent: form intake → enrichment → qualification → routing → email drafting. The human reviews and presses send.

All hugely valuable. Vercel saved $2M+. LangChain’s reps reclaimed 40 hours per month each. But these agents aren’t closing deals. They’re not running discovery. They’re not navigating complex enterprise buying committees. They’re doing the tedious, repetitive, high-volume work that SDRs and AEs spent most of their time on (and that most reps didn’t want to do anyway).

Even the more advanced use cases, like Vercel’s close/loss analysis, LangChain’s Monday morning account intelligence reports, or Deel’s deal strategy alerts, are pattern matching and summarization tasks. Important tasks that weren’t getting done before. But not the creative, strategic, interpersonal work that makes a great AE great.

This is less “AI is eating sales” and more “AI is eating the admin tax on sales.” That framing is more accurate imho. And more useful if you’re deciding where to start.

8. The build itself isn’t technically novel, but shipping to production is hard

I almost didn’t include this one because I don’t want to take anything away from what these teams built. Shipping to production at scale is genuinely hard. The results are real.

But if you look at what the agents are doing under the hood (form processing, data enrichment, LLM-based qualification, structured output, RAG over internal data, email generation) none of it is cutting-edge AI research. These are well-understood patterns stitched together with good engineering and thoughtful product thinking.

What made these projects successful wasn’t technical novelty. It was: access to clean, connected data. Deep understanding of the sales workflow being automated. Someone bridging the gap between AI capabilities and the GTM team’s needs. And organizational willingness to iterate daily.

LangChain’s memory system, where the agent learns from each rep’s edits, is probably the most sophisticated pattern in the group. But even that is a straightforward feedback loop: store the diff, extract style preferences, inject them into future runs.

Technical hurdles have come down. Now, the hurdles to get over are coordination and execution.

9. The build vs. buy calculus has changed

The calculus for the age-old question of build vs. buy has changed because building is dramatically faster, cheaper, and more accessible than it was a few years ago (even six months ago!).

Vercel’s lead agent was built in a weekend. The $2M+ in savings came from deploying it. LangChain’s agent went from concept to 50% daily active usage in a few months. Vanta shipped 3 production workflows in a single hackathon (with support from Clay).

The question isn’t “should you build or buy?” The question is: do you have someone willing to own this end-to-end, and do you have the data infrastructure to support what they build?

Both are valid paths. Ramp and Vercel built because they have engineering cultures that expect it. Your company might get the same results from chaining Clay + an LLM + Slack + your CRM with a GTM Engineer who knows what they’re doing. Or a product marketer who knows what data she needs and how to connect it.

What’s changed is that the floor for building has dropped so far that the build option is now accessible to companies that never would have considered it before. A Series B with a single GTM Engineer can build and deploy a lead qualification agent in weeks, not quarters. A product marketer with Cowork can rebuild an ICP over a weekend.

My bet: within 18 months, “we built our own” will be as common as “we use Outreach” is today.

The teams that start now (even with something basic) will have a meaningful head start on everyone else.

Company table

That’s it for this week.

As always, thank you for your attention and trust. I do not take it for granted.

See you next time,

Brendan 🫡
